Summary

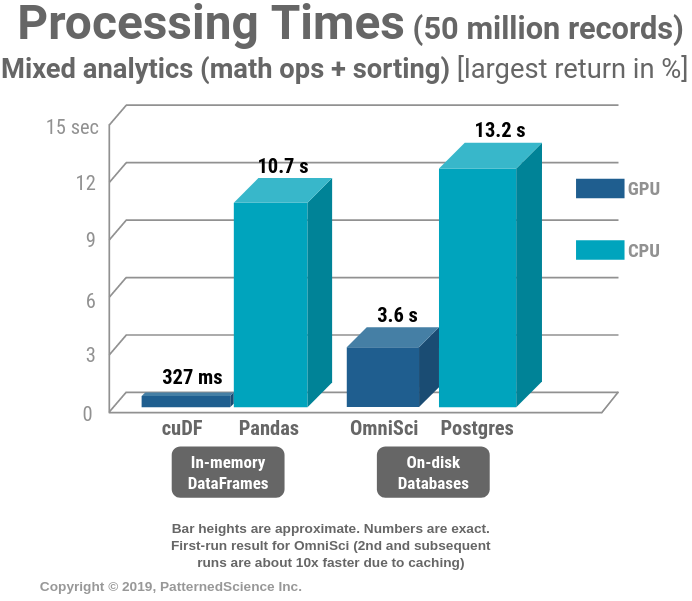

GPUs are known to significantly speed up machine learning model training, especially with deep learning libraries like TensorFlow. But did you know that there are now solid options for accelerating data analytics workloads, BI tools, and dashboards with GPUs as well? Join us for a presentation of performance benchmarks comparing GPU-based options with their CPU-based counterparts. We compare OmniSci Core DB (a GPU database) against Postgres DB (for data analytics) and PDAL (for LiDAR processing). On the in-memory side, we benchmark cuDF (NVIDIA’s GPU DataFrame) against the widely popular Pandas DataFrame. We will share results and include some code walk-throughs and live benchmarking. You will leave this technical talk with insight into how GPUs can accelerate your data analytics and geospatial workloads.